Configuring Subdir in K8s with the New NFS Provisioner

Published: 2023-10-30 06:41

This article is reposted from the WeChat official account 「运维开发故事」, written by 小姜. To repost it, please contact the 运维开发故事 official account.

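Background

Using NFS as volume storage in k8s is nothing new; it is the simplest file storage option and the easiest to get started with. I recently needed NFS storage in k8s, so this post records the setup, along with a few tricks and the pitfalls I ran into along the way.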

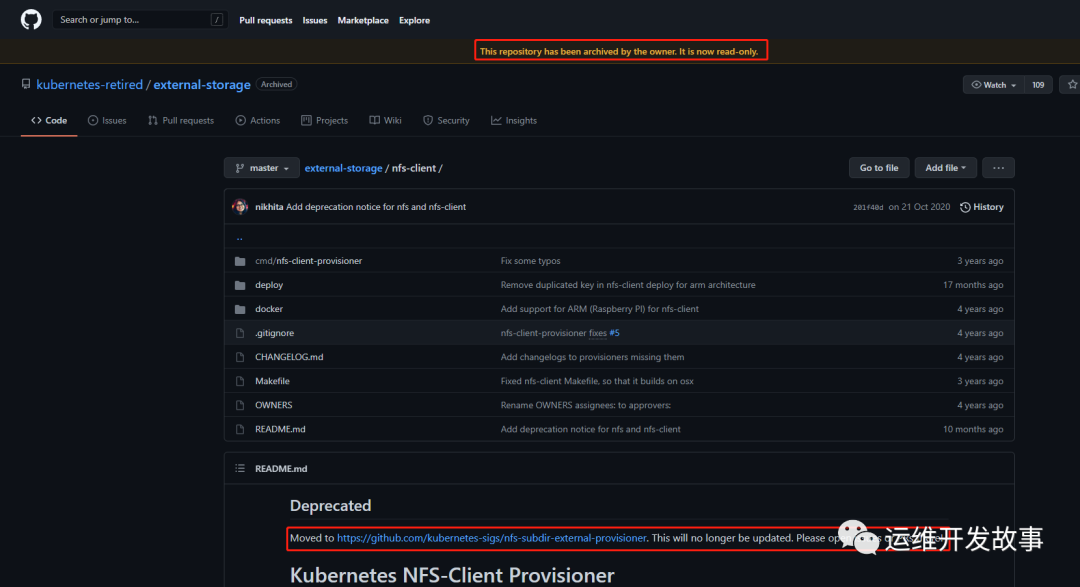

If you visit the NFS provisioner's GitHub repository, you will see a notice that the repository has been archived by its owner and is now read-only.

Old NFS repository: https://github.com/kubernetes-retired/external-storage/tree/master/nfs-client

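The Deprecated notice below it indicates that the project has moved to another GitHub location. In other words, this repository is no longer updated, and the actively maintained repository is the one named after "Moved to".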

New repository: https://github.com/lorenzofaresin/nfs-subdir-external-provisioner

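Compared with the old repository, the updated one has one extra feature: a new parameter, pathPattern. Setting this parameter lets you configure the subdirectory used for each PV.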


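Out of curiosity, let's deploy the new NFS provisioner. The yaml files below can be found in the project's deploy directory. I adjusted them slightly for my environment, for example the IP address of the NFS service. Change the NFS server address and path to match your own setup.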

This walkthrough was carried out on k8s 1.19.0.


class.yaml

    $ cat class.yaml
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: managed-nfs-storage
    provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name, must match deployment's env PROVISIONER_NAME'
    parameters:
      archiveOnDelete: "false"

deployment.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nfs-client-provisioner
      labels:
        app: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: kube-system
    spec:
      replicas: 1
      strategy:
        type: Recreate
      selector:
        matchLabels:
          app: nfs-client-provisioner
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: k8s.gcr.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: k8s-sigs.io/nfs-subdir-external-provisioner
                - name: NFS_SERVER
                  value: 172.16.33.4
                - name: NFS_PATH
                  value: /
          volumes:
            - name: nfs-client-root
              nfs:
                server: 172.16.33.4
                path: /

rbac.yaml

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: kube-system
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-client-provisioner-runner
    rules:
      - apiGroups: [""]
        resources: ["nodes"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["persistentvolumes"]
        verbs: ["get", "list", "watch", "create", "delete"]
      - apiGroups: [""]
        resources: ["persistentvolumeclaims"]
        verbs: ["get", "list", "watch", "update"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["storageclasses"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["create", "update", "patch"]
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: run-nfs-client-provisioner
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: kube-system
    roleRef:
      kind: ClusterRole
      name: nfs-client-provisioner-runner
      apiGroup: rbac.authorization.k8s.io
    ---
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: kube-system
    rules:
      - apiGroups: [""]
        resources: ["endpoints"]
        verbs: ["get", "list", "watch", "create", "update", "patch"]
    ---
    kind: RoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: kube-system
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: kube-system
    roleRef:
      kind: Role
      name: leader-locking-nfs-client-provisioner
      apiGroup: rbac.authorization.k8s.io

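Notes:

If the image cannot be pulled, pull it on a machine outside mainland China and then import it.
The rbac file needs essentially no changes. Configuring subdirectories requires a change to class.yaml, which is covered later.

Create all the resources: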

kubectl apply -f class.yaml -f deployment.yaml -f rbac.yaml

Create a PVC with a simple example:

    $ cat test-pvc-2.yaml
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: test-pvc-2
      namespace: nacos
    spec:
      storageClassName: "managed-nfs-storage"
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 10Gi

    $ cat test-nacos-pod-2.yaml
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: nacos-c1-sit-tmp-1
      labels:
        appEnv: sit
        appName: nacos-c1-sit-tmp-1
      namespace: nacos
    spec:
      serviceName: nacos-c1-sit-tmp-1
      replicas: 3
      selector:
        matchLabels:
          appEnv: sit
          appName: nacos-c1-sit-tmp-1
      template:
        metadata:
          labels:
            appEnv: sit
            appName: nacos-c1-sit-tmp-1
        spec:
          dnsPolicy: ClusterFirst
          containers:
            - name: nacos
              image: www.ayunw.cn/library/nacos/nacos-server:1.4.1
              ports:
                - containerPort: 8848
              env:
                - name: NACOS_REPLICAS
                  value: "1"
                - name: MYSQL_SERVICE_HOST
                  value: mysql.ayunw.cn
                - name: MYSQL_SERVICE_DB_NAME
                  value: nacos_c1_sit
                - name: MYSQL_SERVICE_PORT
                  value: "3306"
                - name: MYSQL_SERVICE_USER
                  value: nacos
                - name: MYSQL_SERVICE_PASSWORD
                  value: xxxxxxxxx
                - name: MODE
                  value: cluster
                - name: NACOS_SERVER_PORT
                  value: "8848"
                - name: PREFER_HOST_MODE
                  value: hostname
                - name: SPRING_DATASOURCE_PLATFORM
                  value: mysql
                - name: TOMCAT_ACCESSLOG_ENABLED
                  value: "true"
                - name: NACOS_AUTH_ENABLE
                  value: "true"
                - name: NACOS_SERVERS
                  value: nacos-c1-sit-0.nacos-c1-sit-tmp-1.nacos.svc.cluster.local:8848 nacos-c1-sit-1.nacos-c1-sit-tmp-1.nacos.svc.cluster.local:8848 nacos-c1-sit-2.nacos-c1-sit-tmp-1.nacos.svc.cluster.local:8848
              imagePullPolicy: IfNotPresent
              resources:
                limits:
                  cpu: 500m
                  memory: 5Gi
                requests:
                  cpu: 100m
                  memory: 512Mi
              volumeMounts:
                - name: data
                  mountPath: /home/nacos/plugins/peer-finder
                  subPath: peer-finder
                - name: data
                  mountPath: /home/nacos/data
                  subPath: data
      volumeClaimTemplates:
        - metadata:
            name: data
          spec:
            storageClassName: "managed-nfs-storage"
            accessModes:
              - "ReadWriteMany"
            resources:
              requests:
                storage: 10Gi

Check the PVCs and the data on the NFS storage:

    # ll
    total 12
    drwxr-xr-x 4 root root 4096 Aug 3 13:30 nacos-data-nacos-c1-sit-tmp-1-0-pvc-90d74547-0c71-4799-9b1c-58d80da51973
    drwxr-xr-x 4 root root 4096 Aug 3 13:30 nacos-data-nacos-c1-sit-tmp-1-1-pvc-18b3e220-d7e5-4129-89c4-159d9d9f243b
    drwxr-xr-x 4 root root 4096 Aug 3 13:31 nacos-data-nacos-c1-sit-tmp-1-2-pvc-26737f88-35cd-42dc-87b6-3b3c78d823da
    # ll nacos-data-nacos-c1-sit-tmp-1-0-pvc-90d74547-0c71-4799-9b1c-58d80da51973
    total 8
    drwxr-xr-x 2 root root 4096 Aug 3 13:30 data
    drwxr-xr-x 2 root root 4096 Aug 3 13:30 peer-finder

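As the listing shows, after creating the PVC by hand and deploying the nacos workload (a StatefulSet in the manifest above) that uses this storage, the corresponding PVs were created automatically and bound to their claims, and the data directories were mounted.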
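To confirm that a provisioned claim is actually writable, a throwaway Pod can mount it and create a file. The following is a minimal sketch, assuming the test-pvc-2 claim in the nacos namespace from the example above. The Pod name test-pod, the busybox:stable image, and the file name SUCCESS are illustrative choices and not part of the original setup.

```yaml
kind: Pod
apiVersion: v1
metadata:
  name: test-pod              # hypothetical name, chosen for this sketch
  namespace: nacos            # must be the namespace of the claim
spec:
  restartPolicy: Never        # run once; do not restart after the command exits
  containers:
    - name: test-pod
      image: busybox:stable   # assumption: any small image with a shell will do
      command:
        - /bin/sh
        - -c
        - touch /mnt/SUCCESS && exit 0 || exit 1
      volumeMounts:
        - name: nfs-pvc
          mountPath: /mnt     # the claim is mounted here inside the container
  volumes:
    - name: nfs-pvc
      persistentVolumeClaim:
        claimName: test-pvc-2 # the PVC created earlier
```

If the Pod reaches the Completed state, a SUCCESS file should appear in the directory that backs the claim on the NFS server.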

Configuring subdirectories

Delete the StorageClass created earlier from class.yaml, add the pathPattern parameter, and then recreate the sc:

    $ kubectl delete -f class.yaml
    $ vi class.yaml
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: managed-nfs-storage
    provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name, must match deployment's env PROVISIONER_NAME'
    parameters:
      archiveOnDelete: "false"
      # add the following parameter
      pathPattern: "${.PVC.namespace}/${.PVC.annotations.nfs.io/storage-path}"

Create a PVC to test whether the generated PV directory lands in a subdirectory:

    $ cat test-pvc.yaml
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: test-pvc-2
      namespace: nacos
      annotations:
        nfs.io/storage-path: "test-path-two" # not required, depending on whether this annotation was shown in the storage class description
    spec:
      storageClassName: "managed-nfs-storage"
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 100Mi

Create the resources:

kubectl apply -f class.yaml -f test-pvc.yaml

Check the result:

    # pwd
    /data/nfs
    # ll nacos/
    total 4
    drwxr-xr-x 2 root root 4096 Aug 3 10:21 nacos-pvc-c1-pro
    # tree -L 2 .
    .
    └── nacos
        └── nacos-pvc-c1-pro

    2 directories, 0 files

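Checking the generated directories on the machine where the NFS export is mounted shows that the subdirectory has indeed been created, and that its hierarchy follows the rule "namespace/annotation value". This matches the pathPattern defined in the StorageClass above.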
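The pathPattern template is not limited to annotations. As far as the project's README describes it, the pattern can reference PVC metadata such as the name, namespace, labels, or annotations through ${.PVC.<metadata>}. The following is a sketch of a variant that needs no annotation on the claim. The StorageClass name managed-nfs-storage-by-name is hypothetical and chosen only for illustration.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage-by-name   # hypothetical name for this sketch
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # must match the deployment's PROVISIONER_NAME
parameters:
  archiveOnDelete: "false"
  # directory layout: <PVC namespace>/<PVC name>, no annotation required
  pathPattern: "${.PVC.namespace}/${.PVC.name}"
```

With this class, a claim named test-pvc-2 in the nacos namespace should end up under nacos/test-pvc-2 on the NFS export.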

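Provisioner high availability

Production environments should avoid single points of failure wherever possible, so this section sets up a highly available provisioner. The updated provisioner configuration is as follows: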

    $ cat nfs-deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nfs-client-provisioner
      labels:
        app: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: kube-system
    spec:
      # three pod replicas, for high availability
      replicas: 3
      strategy:
        type: Recreate
      selector:
        matchLabels:
          app: nfs-client-provisioner
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          imagePullSecrets:
            - name: registry-auth-paas
          containers:
            - name: nfs-client-provisioner
              image: www.ayunw.cn/nfs-subdir-external-provisioner:v4.0.2-31-gcb203b4
              imagePullPolicy: IfNotPresent
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: k8s-sigs.io/nfs-subdir-external-provisioner
                # enable leader election for high availability
                - name: ENABLE_LEADER_ELECTION
                  value: "True"
                - name: NFS_SERVER
                  value: 172.16.33.4
                - name: NFS_PATH
                  value: /
          volumes:
            - name: nfs-client-root
              nfs:
                server: 172.16.33.4
                path: /

Recreate the resources:

kubectl delete -f nfs-class.yaml -f nfs-deployment.yaml
kubectl apply -f nfs-class.yaml -f nfs-deployment.yaml

Check whether provisioner high availability is working:

    # kubectl get po -n kube-system | grep nfs
    nfs-client-provisioner-666df4d979-fdl8l 1/1 Running 0 20s
    nfs-client-provisioner-666df4d979-n54ps 1/1 Running 0 20s
    nfs-client-provisioner-666df4d979-s4cql 1/1 Running 0 20s
    # kubectl logs -f --tail=20 nfs-client-provisioner-666df4d979-fdl8l -n kube-system
    I0803 06:04:41.406441 1 leaderelection.go:242] attempting to acquire leader lease kube-system/nfs-provisioner-baiducfs...
    ^C
    # kubectl logs -f --tail=20 -n kube-system nfs-client-provisioner-666df4d979-n54ps
    I0803 06:04:41.961617 1 leaderelection.go:242] attempting to acquire leader lease kube-system/nfs-provisioner-baiducfs...
    ^C
    [root@qing-core-kube-master-srv1 nfs-storage]# kubectl logs -f --tail=20 -n kube-system nfs-client-provisioner-666df4d979-s4cql
    I0803 06:04:39.574258 1 leaderelection.go:242] attempting to acquire leader lease kube-system/nfs-provisioner-baiducfs...
    I0803 06:04:39.593388 1 leaderelection.go:252] successfully acquired lease kube-system/nfs-provisioner-baiducfs
    I0803 06:04:39.593519 1 event.go:278] Event(v1.ObjectReference{Kind:"Endpoints", Namespace:"kube-system", Name:"nfs-provisioner-baiducfs", UID:"3d5cdef6-57da-445e-bcd4-b82d0181fee4", APIVersion:"v1", ResourceVersion:"1471379708", FieldPath:""}): type: 'Normal' reason: 'LeaderElection' nfs-client-provisioner-666df4d979-s4cql_590ac6eb-ccfd-4653-9de5-57015f820b84 became leader
    I0803 06:04:39.593559 1 controller.go:820] Starting provisioner controller nfs-provisioner-baiducfs_nfs-client-provisioner-666df4d979-s4cql_590ac6eb-ccfd-4653-9de5-57015f820b84!
    I0803 06:04:39.694505 1 controller.go:869] Started provisioner controller nfs-provisioner-baiducfs_nfs-client-provisioner-666df4d979-s4cql_590ac6eb-ccfd-4653-9de5-57015f820b84!

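The log line "successfully acquired lease kube-system/nfs-provisioner-baiducfs" shows that the third pod was elected leader, while the other two keep waiting to acquire the lease. High availability is working.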
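Leader election only helps if the replicas do not all sit on the same node, since a single node failure would otherwise take down every candidate at once. One possible hardening step, not part of the original setup, is a preferred pod anti-affinity rule in the Deployment's pod template. The fragment below is a sketch that assumes the app: nfs-client-provisioner label used above. It shows only the fields to merge into nfs-deployment.yaml, not a complete manifest.

```yaml
# Fragment to merge into the Deployment above; not a complete manifest.
spec:
  template:
    spec:
      affinity:
        podAntiAffinity:
          # "preferred" keeps pods schedulable even when there are fewer nodes than replicas
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchLabels:
                    app: nfs-client-provisioner    # label from the Deployment above
                topologyKey: kubernetes.io/hostname # spread across distinct nodes
```

A preferred rule is a soft constraint, so the scheduler may still place two replicas on one node when no other node fits.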

Errors

During the setup, describe pod reported the following error:

    Mounting arguments: --description=Kubernetes transient mount for /data/kubernetes/kubelet/pods/2ca70aa9-433c-4d10-8f87-154ec9569504/volumes/kubernetes.io~nfs/nfs-client-root --scope -- mount -t nfs 172.16.41.7:/data/nfs_storage /data/kubernetes/kubelet/pods/2ca70aa9-433c-4d10-8f87-154ec9569504/volumes/kubernetes.io~nfs/nfs-client-root
    Output: Running scope as unit: run-rdcc7cfa6560845969628fc551606e69d.scope
    mount: /data/kubernetes/kubelet/pods/2ca70aa9-433c-4d10-8f87-154ec9569504/volumes/kubernetes.io~nfs/nfs-client-root: bad option; for several filesystems (e.g. nfs, cifs) you might need a /sbin/mount.<type> helper program.
    Warning FailedMount 10s kubelet, node1.ayunw.cn MountVolume.SetUp failed for volume "nfs-client-root" : mount failed: exit status 32
    Mounting command: systemd-run

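Fix: the cause turned out to be that the node the pod was scheduled onto had no NFS client installed. Installing the NFS client package nfs-utils on that node resolves the error.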
